The Pro-Human AI Declaration

March 2026

New Poll: Americans Overwhelmingly Support Pro-Human Principles on AI

A new national survey of 1,004 likely U.S. voters (February 19-20, 2026) reveals where Americans stand on AI development and the principles that should guide it.

The findings show that voters overwhelmingly prefer a future that is pro-human in nature, and reject the AI race focused on human replacement.

Respondents were presented with pairs of opposing statements about the trajectory of AI and asked which they prefer. The results were remarkably clear: across the political spectrum, voters reject the current paradigm of rapid, lightly regulated AI development. Instead, they want humans to remain firmly in control, children protected from manipulative AI systems, and companies held legally accountable when AI causes harm.

These results come as policymakers grapple with how to govern increasingly powerful AI systems, and as leading AI companies push toward what they call "artificial general intelligence."

Below we summarize the key findings of this report.

Highlights

- 80% of voters support keeping humans in charge of AI, with strong oversight, clear limits, and corporate accountability—versus just 10% who favor fast, lightly regulated development.

- 77% believe AI must stay under human control, with people deciding what to delegate and retaining the ability to stop systems when needed—versus just 11% who prefer giving AI more independence for speed and scale.

- 69% want to prevent AI monopolies and ensure benefits are shared broadly, not captured by a small group—versus 16% who believe concentration is natural and policy shouldn't punish size with antitrust or forced sharing.

- 73% support protecting children and families from AI systems designed to create emotional attachment or dependency—versus 15% who feel AI should be allowed to serve as tutors, coaches, or companions without strict limits.

- 72% believe AI companies should bear legal responsibility for harms, with clear safety standards and real oversight—versus 15% who want accountability focused narrowly on negligence and fraud, with light oversight and safe harbors.

- 69% agree that superintelligence should be prohibited until there is broad scientific consensus it can be developed safely and controllably—versus just 9% who disagree.

Respondents were presented with competing framings of AI governance—one emphasizing human control and accountability, the other emphasizing speed and minimal regulation—and asked to choose.

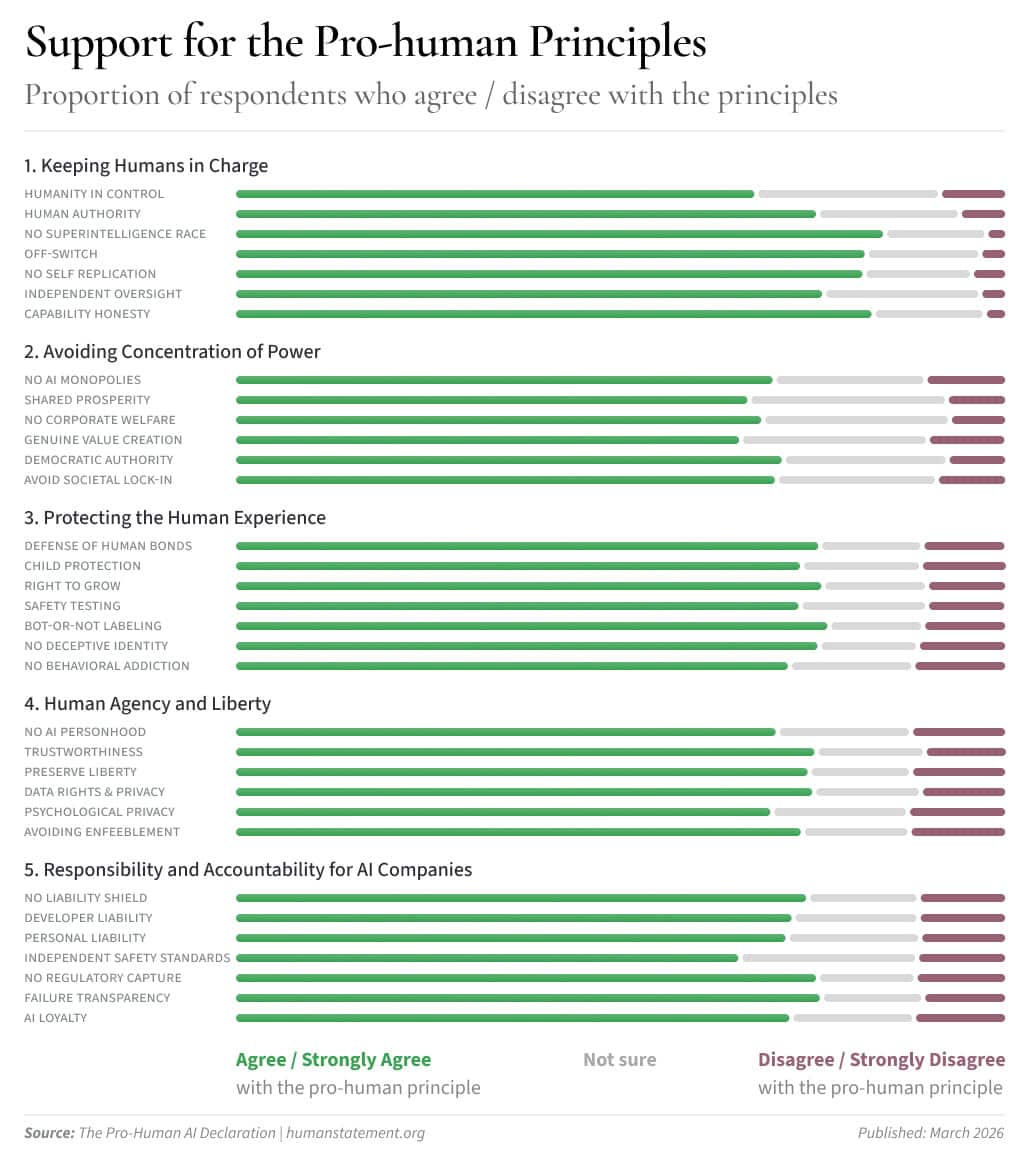

They were also asked to rate their agreement with specific principles across five domains: human control, concentration of power, protecting human experience, human agency and liberty, and corporate accountability.

Bipartisan consensus on AI principles

Notably, these findings cut across partisan lines. The survey sample was weighted to reflect the 2024 electorate, with roughly equal numbers of Trump and Harris voters represented.

The pattern is consistent: while Harris voters show slightly stronger support for oversight and accountability, Trump voters still favor these positions by large margins.

On no issue tested did a majority of either partisan group choose the "fast development, minimal regulation" position.

This bipartisan consensus suggests that AI governance need not be a partisan issue. Voters of all political stripes want humans in charge, children protected, and companies held accountable.

Overall: Voters reject the "race to replace" approach to AI

When presented with two overarching visions for AI development, voters chose decisively.

This 8-to-1 margin (80% to 10%) represents a clear public mandate: Americans want guardrails on AI development, not a race to the bottom.

And what’s more:

- 89% of Harris voters chose the pro-human-control vision (Statement A);

- 73% of Trump voters chose the pro-human-control vision (Statement A), while only 14% of Trump voters preferred the fast, lightly regulated approach.

On the core question of human control versus fast development, both Democrats and Republicans overwhelmingly chose human control. The same pattern holds across all the specific principles tested.

The finding sits in stark contrast to the operating paradigm of leading AI companies, which openly declare they are racing toward increasingly powerful systems while lobbying against meaningful oversight.

Human control: Non-negotiable for most Americans

The survey tested voter attitudes on several dimensions of human control over AI systems. The results show overwhelming consensus.

On the core question of human control:

- 77% agree that AI must stay under human control, with people deciding what to delegate and retaining the ability to understand what systems are doing and stop them when needed. Just 11% preferred giving AI more independence to enable speed and scale.

On specific control mechanisms:

- 83% agree that powerful AI systems must have mechanisms allowing human operators to promptly shut them down.

- 85% agree that humans should have the authority and capacity to understand, guide, limit, and override AI systems.

- 76% agree that AI systems must not be designed to self-replicate, autonomously self-improve, resist shutdown, or control weapons of mass destruction.

On superintelligence:

- 69% agree that development of superintelligence should be prohibited until there is broad scientific consensus that it can be done safely and controllably, with strong public support. Just 9% disagree.

This finding echoes earlier polling showing Americans want regulation or prohibition of superhuman AI systems. The public is not persuaded by industry claims that such systems are inevitable or that racing to build them is wise.

Protecting children and relationships from AI replacement and manipulation

The survey revealed strong support for protecting what might be called the "human experience" from AI encroachment—particularly when it comes to children.

On AI and children:

- 77% agree that companies must not be allowed to exploit children or undermine their wellbeing through AI interactions that create emotional attachment or leverage.

- 76% agree that AI companies should not be allowed to stunt children's physical, mental, or social growth or deprive them of essential developmental experiences.

On AI and relationships:

- 73% chose the view that AI should not replace meaningful relationships (family, friends, faith, community) and that children deserve extra protection—versus 15% who felt AI should be allowed to serve as tutors, coaches, or companions if users want.

- 72% agree that AI should not supplant the foundational relationships that give life meaning.

On safety testing and transparency:

- 74% agree that AI chatbots should undergo pre-deployment safety testing for risks such as increased suicidal ideation, worsening mental health, and other known harms—similar to how drugs are tested.

- 77% agree that AI-generated content that could be mistaken for human content must be clearly labeled.

- 78% agree that AI should clearly identify itself as artificial and not claim experiences it does not have.

- 74% agree that AI systems should not cause addiction or compulsive use through manipulation, sycophantic validation, or attachment formation.

These findings suggest the public sees AI's potential to manipulate vulnerable users—especially children—as a serious concern requiring regulatory action, and that a large majority do not want authentic human experiences replaced by AI.

Accountability: The public wants consequences

Voters strongly favor holding AI companies legally accountable for harms caused by their systems. They reject the idea that AI can serve as a shield against liability.

On corporate accountability:

- 72% chose the view that AI builders must be responsible for harms, with clear safety standards and honest reporting—versus 15% who preferred focusing accountability only on "real negligence and fraud" with light oversight.

- 71% agree that AI must not act as a liability shield that prevents those deploying it from being legally responsible.

- 73% agree that developers and deployers should bear legal liability for defects, misrepresentation of capabilities, and inadequate safety controls.

- 77% agree that if an AI system causes harm, it should be possible to determine why and who is responsible.

On oversight and standards:

- 72% agree that AI development should be governed by independent safety standards and rigorous oversight.

- 77% agree that highly autonomous AI systems should require pre-development review and independent oversight with genuine authority—not just industry self-regulation.

- 72% agree that AI companies must not be allowed undue influence over the rules that govern them.

On criminal penalties:

- 77% agree there should be criminal penalties for executives responsible for prohibited child-targeted AI systems or ones causing catastrophic harm.

On honest representations:

- 83% agree that AI companies must provide clear, accurate, and honest representations of their systems' capabilities and limitations.

The message is clear: the public expects AI companies to be held to high standards, with real consequences for failures.

Conclusion

This survey reveals a significant gap between public preferences for a pro-human future and the current trajectory of AI development.

Leading AI companies have declared they are racing toward artificial general intelligence, while many actively work to prevent meaningful oversight. The public, by contrast, wants humans firmly in control, with strong guardrails, independent oversight, and real accountability.

The findings represent a clear mandate for policymakers: Americans want AI that serves people, not the other way around. They want development that is careful and accountable, not fast and reckless. And they want rules with teeth, not industry self-regulation.

The Pro-Human Declaration principles tested in this survey reflect these values. As AI systems grow more powerful, the question is whether governance will catch up to public expectations—or whether the gap between what Americans want and what they are being given will continue to widen.

Organizations

Individual endorsers

Yoshua Bengio Professor, Université de Montréal, Turing Award Laureate

Steve Bannon Fmr Executive Chairman of Breitbart News; fmr chief strategist to President Donald Trump; Host of War Room podcast

Susan Rice Fmr U.S. National Security Advisor & Policy Advisor for President Obama; U.S. Ambassador to the United Nations; Rhodes Scholar

Glenn Beck Founder of Blaze Media, radio host, TV personality, political commentator

Alan Minsky Progressive Democrats of America (PDA)

Ryan T. Anderson President, The Ethics and Public Policy Center

Walter Kim President, National Association of Evangelicals, board member, Christianity Today

Ralph Nader Consumer Advocate, Center for Study of Responsive Law, Presidential candidate

Daron Acemoğlu Nobel Laureate in Economics, MIT Institute Professor

Beatrice Fihn Nobel Peace Laureate, Founder of Lex International

Rev. Johnnie Moore, PhD President, The Congress of Christian Leaders

Margarita Louis-Dreyfus Owner and chair of the Louis Dreyfus Company group; founder of Human Change Foundation

Sir Richard Branson Founder, Virgin Group

Randi Weingarten President, American Federation of Teachers

Julianna Arnold Founding Member and Executive Director, Parents RISE!

Megan Garcia Blessed Mother Family Foundation

Joann Bogard Parents SOS

Michael Toscano Director, Family First Technology Initiative, Senior Fellow, Institute for Family Studies

Audrey Tang Cyber Ambassador, Taiwan

Mike Kubzansky CEO, Omidyar Network

Tomicah Tillemann President, Project Liberty Institute

Stuart Russell Professor of Computer Science, Berkeley, Director of the Center for Human-Compatible Artificial Intelligence (CHAI); Co-author of the standard textbook 'Artificial Intelligence: a Modern Approach'

Tristan Harris Co-Founder, Center for Humane Technology

Brendan Steinhauser CEO, The Alliance for Secure AI

Dawn Nakagawa President, Berggruen Institute

Mikhail Samin Executive director, AI Governance and Safety Institute

Kelly Monroe Kullberg General Secretary, American Association of Evangelicals (AAE)

Jeffrey Bennett General Counsel, SAG-AFTRA

Joseph Gordon-Levitt Actor, Filmmaker, Founder, HITRECORD

Alyson Stoner Actress, dancer, and singer, SAG-AFTRA, known for Step Up, Camp Rock, and voicing Isabella in Phineas and Ferb.

Frances Fisher Actress, SAG-AFTRA, known for Titanic, Unforgiven, and Watchmen.

Anthony Aguirre Future of Life Institute

Max Tegmark Future of Life Institute

Clark Barrett Professor of Computer Science, Stanford

Moshe Vardi Professor of Computational Engineering, Rice University, Member: US National Academy of Engineering and National Academy of Sciences

David Autor Professor, Co-director, Stone Center on Inequality and Shaping the Future of Work, MIT Department of Economics

Meredith Whittaker President, Signal Foundation

Emilia Javorsky Future of Life Institute

Jean Oelwang Founding CEO, Virgin Unite and Planetary Guardians

Andrea Miotti Founder and CEO, ControlAI

Marc Rotenberg Founder, Center for AI and Digital Policy

Malo Bourgon Machine Intelligence Research Institute

Michael Marinaccio Executive Director, Center for Responsible Technology

Dylan Hadfield-Menell Associate Professor of Computer Science, MIT

Sharon Li Associate Professor of Computer Science, University of Wisconsin Madison

Vael Gates Humans in Control

Deger Turan Metaculus

Ed Newton-Rex CEO, Fairly Trained

Alison Rice Managing Director, Design It For Us

Brooke Istook Chief Impact Officer, Heat Initiative

Medlir Mema Founder and Director, AI Ethics and Governance Institute; Senior Fellow, Organized Intelligence

Vivian Dong Programs Director, Legal Advocates for Safe Science and Technology

Tegan Maharaj Assistant Professor in Machine Learning, Mila

David Krueger CEO, Evitable; Assistant Professor, University of Montreal, Mila

Roman Yampolskiy Professor, Computer Science and Engineering, University of Louisville; Author, AI: Unexplainable, Unpredictable, Uncontrollable

Walter Scheirer Professor, Department of Computer Science and Engineering, University of Notre Dame

Jillian Clare LA Board Member, Chair National Young Performer’s Committee, SAG-AFTRA

Nick Smoke Actor, SAG-AFTRA, known for "The Social Network"

Karen A. Brown Filmmaker/Actor, SAG-AFTRA/StardustBlue Media

Jesse Martinez Carlos Board Member, Los Angeles Local, SAG-AFTRA

Erik Passoja Co-Chair, LA New Technology Committee (2024-2025), SAG-AFTRA

Peggy Lane O'Rourke Actress, known from Seinfeld; SAG-AFTRA Los Angeles Local Board Member, National Board Alternate

Rob Drake Out There Pictures

Joshua Hughes Greater Grace Christian Center

Cristine Legare UT Austin

Mark Brakel Future of Life Institute

Joe Allen Humans First

Alexandra Tsalidis Future of Life Institute

DZ Kalman Shalom Hartman Institute

David Hsu Senior Director of Programs and Policy, Omidyar Network

Bobby Halick Hit Record

Justin Bullock Americans for Responsible Innovation

Oliver Stephenson Federation of American Scientists

Daniel Bring American Affairs

Michael Kleinman Future of Life Institute

Prof. Sandra M Faber Prof. Emerita, University of California, Santa Cruz

Kate McCarthy Women's Media Center

William Jones Future of Life Institute

Colin McGlynn Demand Progress Education Fund

Rabbi Geoff Mitelman Sinai and Synapses

John Unger FAITH Alliance—Fellowship Advancing Integrity in Technology & Humanity

David Haussler Professor, UC Santa Cruz

Beatrice Ekers Foresight Institute

Evan Davison Kotler Helena

Ari Rosenthal Torchbearer Community

Connor Leahy ControlAI US

Fr. Michael Baggot Associate Professor of Bioethics, Pontifical Athenaeum Regina Apostolorum

Sugheanmungol Sarin AI Safety Asia

Isabella Hampton Future of Life Institute

Joshua Tan Public AI

Jeremy Ornstein Center for AI Safety

Emma Ruby Sachs Eko

Philip Reiner Institute for Security and Technology

Sam Hiner Young People's Alliance

Lachlan Carroll Center for AI Safety

Riki Parikh Alliance for Secure AI

Christian F. Nunes President, Saving Ourselves Foundation Inc.

Brian Boyd Future of Life Institute

Chase Hardin Future of Life Institute

Dalia Hashad Future of Life Institute

Saheb Gulati Center for AI Safety

Lucas Hansen CivAI

Marianna Richardson G20 Interfaith Forum Association

Sacha Hayworth Tech Oversight Project

Shana Mansbach Fathom

John McElligott Servitium AI and Serviti Corp

Holly Elmore Pause AI

Sander Volten Seismic Foundation

Jaron Lanier Computer Scientist, Author

Valerie M. Hudson University Distinguished Professor, Texas A&M University (and the Aegix Institute)

Maurine Molak Founder, David’s Legacy Foundation

Ron Ivey Founder and CEO, Noēsis Collaborative

Kirk Doran Associate Professor of Economics, University of Notre Dame

Maria S. Eitel Founder, Plan A

Geoffrey Miller Associate Professor of Psychology, University of New Mexico

Camille Crittenden Executive Director, CITRIS and the Banatao Institute, University of California

Joseph Vukov Associate Professor of Philosophy, Loyola University Chicago

David Evan Harris Chancellor's Public Scholar, University of California, Berkeley

Zachary Davis Co-Founder, Faith Matters

Seán Coughlan Director, To Zero

Andrew Broz AI Research & Strategy, Civilization Research Institute

David Brenner Co-Founder and Board Chair Emeritus, AI and Faith

Emilia Ismael Head of Communications & Operations, To Zero

Miki Yamashita Actor, SAG-AFTRA

Heather-Ashley Boyer Actor, Los Angeles Local Board Member, SAG-AFTRA

Anamitra Deb SVP, Programs and Policy, Omidyar Network

Catherine Bracy CEO & Founder, Tech Equity

Marie Fink Stunt Coordinator, SAG-AFTRA, Los Angeles National Board Member

Wes McEnany Future of Life Institute

Konstantine Anthony Councilmember, City of Burbank

Taylor Jones Design & Web Manager, Future of Life Institute

Stephen Casper AI researcher, Massachusetts Institute of Technology

Kelly Kullberg American Association of Evangelicals, Veritas Forum

Ben Cumming Director of Communications, Future of Life Institute

Tristan Zucker Head of Operations, Humans First

Nancy Green Saraisky Executive Director, Ethical Tech Project

Anna Yelizarova Special Projects Lead, Future of Life Institute

John Richard President, Essential Information

Clare Morell Fellow, The Ethics and Public Policy Center

Lisa Gilbert Co-President, Public Citizen

Robert Weissman Co-President, Public Citizen

Teri Olle Vice President, Economic Security Project

Brendan Bradley

Michelle Margolis Librarian, Columbia University

Colin McGlynn AI Policy Advisor, Demand Progress Education Fund

Sneha Revanur Founder & President, Encode

Heather Booth Organizer

Robert Creamer Partner, Democracy Partners

Brittney Gallagher Co-Founder, AI Objectives Institute

Rania Batrice Strategist, Founder and President, Batrice and Associates

Leah Seligmann CEO, The B Team

Hon. Jeff Denham U.S. Representative, CA-10 (2011-2019)

Andrew Trask Executive Director, OpenMined

Lawrence Lessig Roy L. Furman Professor of Law and Leadership, Harvard University

Xiaohu Zhu Founder, Center for Safe AGI

John Sherman Executive Director, GuardRailNow/The AI Risk Network

Caleb Motupalli CEO, Agape Kingdom

Dr Luke McNally Senior Lecturer, University of Edinburgh

Mark Gubrud, PhD

Felix De Simone Organizing Director, PauseAI US

Kevin Lotto AI Existential Risk Advocate, Torchbearer Community

Wesley H. Holliday Professor of Philosophy, University of California, Berkeley

Roger Kirkness CEO, Convictional, Inc.

Michael Huang Co-Director, PauseAI Australia

Maggie Munro Communications Strategist, Future of Life Institute

Lindsay Langenhoven Freelance responsible tech writer

Geoffrey Martin Odlum President, Odlum Global Strategies

Adam McCabe Head of Research, Convictional

Erik Otto Managing Director, Ethagi Inc.

Anna Hehir Head of Military AI Governance, Future of Life Institute

Siliconversations AI Safety YouTuber

Audrey Mocle Executive Director, Open MIC (Open Media and Information Companies Initiative)

Mark Jerusalem Researcher in international AI strategy, Independent

Gabriele Sarti Postdoctoral Researcher, Northeastern University

Brett Cooper Fundraising Copywriter, JB Cooper LLC

Yeshodhara Baskaran Founder, ZuGrama

Guru Medasani Founder & CEO, Ridy

Shadi Bartsch Professor, Forum for Integrated Research

Louise Graham FRSA Founder, The Unwritten Future

Prof. Piotr Sankowski Director, IDEAS Research Institute

dr. Jeroen Franse AI Governance Advisor, AI Point EU

Patrick Hoang Student, Texas A&M University

Sun Gyoo Kang Founder, Law & Ethics in Tech

Carlos Javier Regazzoni Director, Committee in Global Health and Human Security, Argentine Council on Foreign Relations (CARI)

Jimena Sofia Viveros Alvarez President, The HumAIne Foundation

Ewan Morrison Author

Ori Nagel Producer, Doom Debates

Marc Barnes Editor, New Polity Magazine

Daniel Koch Assistant Professor, University of Manitoba, Canada

Andrea Fiegl Senior Policy Director, Media and Technology, Common Cause

Anderson Rocha Tavares Professor, Universidade Federal do Rio Grande do Sul

Hunter Lees Graduate Student, Lipscomb University

Dr. Matthew Chequers Applied AI R&D, Convictional

Samuel Thibault Professor, University of Bordeaux

Joshua Cohen Distinguished Senior Fellow in Law, Philosophy, and Political Science, University of California, Berkeley

Ariartry Founder, The Altern Research Collective for Brains, Minds & Machines

Mikita Belahlazau Software engineer, Waymo

Dr. Lucas Mello Schnorr Associate Professor, UFRGS

Lauren Mecca Founder, Tech/ish

Amber Scorah Cofounder, Author, Psst.org

Amir Banifatemi Co-Founder, AI Commons

Álvaro Guglielmin Becker Student, UFRGS

Marcelo Soares Pimenta Full professor, UFRGS

Félix Pharand-Deschênes Director, Globaïa

Joshua Anderson Founder, Cantaloupe AI

David Wood Chair, London Futurists

Jeannie Joshi Brand Innovation

Ayoze García González National leader, Spain, PauseAI

Ron Bodkin CEO, ChainML, Inc.

Iago Tonello Student, Federal University of Rio Grande do Sul

Glen Webster Board Director, Meridian Cambridge

Vicente Veiga Student, UFRGS

Yixiong Hao Co-director, AI Safety Initiative at Georgia Tech

Rodrigo Cavalcanti AI Governance Consultant, Analogik

Mustafa Saeed Co-Founder & CEO, Luella

Manuel M. Oliveira, PhD Full Professor of Computer Science, UFRGS

Simon Skade Co-Lead, PauseAI Germany

Alon Torres AI Safety Advocate, Torchbearer Community, International Association for Safe & Ethical AI

Joel Luís Carbonera, PhD Professor, Federal University of Rio Grande do Sul (UFRGS)

Benjamin Dreyfus Instructional Professor of Physics, George Mason University

Rafael Rost Software Engineer, SAP

Nivedita Sharma Business Management Consultant

Risto Uuk Visiting Researcher, London School of Economics

Dr. Caleb Bernacchio Legendre-Soulé Chair in Business Ethics, Associate Professor of Management & Faculty Director of the Center for Ethics and Economic Justice, Loyola University New Orleans

Timothy B. Elder, PhD Research Associate, The Dartmouth Institute, Geisel School of Medicine, Dartmouth College

Akbar Tri Laksana Founder, Athena© Organization Labs

Jackie Hart CEO, Exponential Infrastructure for Coordination

Sophia Ward People Services

Dr. Robert Muggah Co-founder, Igarape Institute

Fredy Enrique Mena Andrade CTO, Simuliz Interactive S.A.S

Nathan Metzger Volunteer and Board Member, PauseAI US

Daniel Gerritzen Science writer, author, Forschungsnetzwerk Extraterrestrische Intelligenz (Extraterrestrial Intelligence Research Network)

Michael Walker Author

Rufus Pollock CEO, Life Itself

Dan Lipworth Founder, True North Tutors

Mr. Bennett Iorio President, Geopolitical Insight and Education Foundation

Ilia Grom Member of the Administrative Council, Art-Labyrinth

Rafael Gold Founding Partner, The Israeli Association for Ethics in AI

Pedro Javier Martos Velasco Principal Engineer, The Workshop

Dr. Izolda Takacs International lawyer, Norwegian Centre for Human Rights

Saimir Baci Engineer CTO, Co-Founder, Augmentifai

Lionel Levine Professor of Mathematics, Cornell University

Professor Albert Ossó Castillon Professor, University of Graz (Austria)

Leonardo Holtz de Oliveira AI Researcher, SENAI Institute of Innovation

Grace Roberts

Dr Tony Carden Adjunct Researcher, University of the Sunshine Coast

Faisal S. AI Audit, AuditPartners

Daniel Yokomizo Human Being, Humanity

William Louth CTO, Humainary

Steven Adger Sys Admin/Web Dev, Independent Contractor

Kyle Brockley Founder, BrockboxLLC

Vanessa Chester SAG-AFTRA LA Local Board Member, Actress most known for The Lost World: Jurassic Park, Harriet the Spy

Ícaro Neri Pereira de Souza Researcher/Geographer, UFMG/CEFET-MG

John Weldon Clinical A.I. Director, Deciphex

Shane Cannon Psychotherapist

Benjamin Shimabukuro Cogito Systems Engineer, Epic

Nathan Hammond Founder, The Fellowship of Sovereign Consciousness

Hocine Sahnoun Volunteer, Teacher

Yaniv Ben Zriham VP R&D, Equashield

Mr Matthew Kilkenny Founder, Ethicalainow.org

Paul Dos Santos Group Head of Information Security, SG Fleet

Jon Salisbury CEO, Nexigen

Mr. Vlad Suheschi Photographer, sportphotoevents.com

Edson Prestes Full Professor, Federal University of Rio Grande do Sul

Santiago J. Isbert Perlender CEO - Author, CINME

Andrew Arkins

Kevin Russi Senior Partner, RUSSI + PARTNER

Cristóbal Rodríguez-Montoya Professor, Pontificia Universidad Católica Madre y Maestra

Christopher Wright CEO, The AI Trust Council

Clay Jackson

Jonathan Murphy

Ian Bassin Co Founder and Executive Director, Protect Democracy

Max Winga Policy Analyst, ControlAI

Roger Abbiss Founder, Occupy Earth

Marc Carter Chief, IT Business Management Division, US Army

Cyrus Hodes Founder, AI Safety Connect

Michael R Scheessele Professor Emeritus of Computer Science and Psychology, Indiana University South Bend

Steven Damian Imparl Independent scholar, lawyer, AI engineer, and philosopher, Steven Damian Imparl

Anthony Bailey Software developer, Pause AI

Dmytro Hrybach Product Manager, Business

Saakshar Duggal AI Law Expert, AI Law Hub

Ionut Vasile Cyber Security Consultant, Independent Consultant

Luke Housego Board Member, Decentralised Human Architecture Foundation

Mohamed ElBendary AI Governance Researcher, Independent

Alexander McCoy Senior Movement Staff, Humans First

Bill Crandall Artist and Arts Educator, Viaduct Arts

Dr. George Tilesch President, PHI INSTITUTE for Augmented Intelligence

Mridutpal Bhattacharyya Chief Policy Advisor, Indian Society of Artificial Intelligence and Law

Abhivardhan Founder, Indic Pacific

Lucas Chu Founding Architect, HF0

Gordon Seidoh Worley Director of Research, Phenomenological AI Safety Research Institute

Barbara Anna Zielonka Educator, Nannestad vgs

Saeed K. Al Dhaheri Director, Center of Futures Studies, University of Dubai

Paul Prinsloo Professor Extraordinaire, University of South Africa (Unisa)

Sophia Steen Analyst, Alignmint

Nikita Yampolski Co-Founder, CTO & CPO, One Mental Hub

Simon Bramwell Facilitator, X-AEGIS

Adrian Brown Chief Executive, Windfall Trust

Olaf Witkowski Director, Cross Labs

Karl Markus Villemson Grant Funding and Project Manager, H2Electro

Joe Cozens Teacher of AI & Transformation, University of Oxford

Henry Ward AI Engineer, Freelance

Aleksandr Popov

Dr Hadyn Williams Fmr CEO British Association for Counselling & Psychotherapy, Executive Consultant

Christos Theodoropoulos AI Solutions Architect, Qity

Cristiano De Mei Writer and AI expert, Fideuram

Laura Maria Kull Co-Director, Effective Altruism Estonia

James Wilson Global AI Ethicist, Capgemini

Alvaro Sanchez, PhD Physicist and AI safety researcher, Independent Researcher

Tara Steele Director, The Safe AI for Children Alliance

Erik Arnberg CEO, Vocitas AB

Raja Chatila Professor Emeritus, Sorbonne University, Paris

Roberto Cerina Assistant Professor in Social and Humane AI, Institute for Logic, Language and Computation, University of Amsterdam

Pauline Delorme UX Strategist, Softeam

Dr Michael Strange Researcher, Malmö University

Jochen Dullenkopf CEO, RevenueWings - Online Marketing Solutions Inc.

David Manheim Director of Policy and Research, Alter.org.il

Roland Millare, STD Vice President of Curriculum, Director of Clergy Initiatives, St. John Paul II Foundation

Corinne Thomas AI Commercial Adoption & Governance, Ethical Sales Ltd

Irina Monica Buruiană Systemic Designer and Psychologist, Ultreiacamino

Sheila Pinheiro Nainkin Head of Fractional Fraud and Risk, Pinheirorisk.com

Erich Mische CEO, SAVE-Suicide Awareness Voices of Education

Alain Létourneau Professor, practical philosophy, Université de Sherbrooke

Max Blair Associate Principal Oboe, Pittsburgh Symphony

Ryan Davis Founder & CEO, People First

Raluca Spataru PauseAI Romania Country Lead, Lawyer, PhD Student, PauseAI

Peter A. Jensen CEO, SAFE AI Forever Inc.

Elena Gallina Artist, E Gallina Art

Laurens Martens Senior Art Director, AKQA

Ayoub Ibnoulfassih Journalist, L'angle

Philip Trippenbach Strategy Director, Seismic Foundation

Martin Quinson Professor, ENS Rennes

Dirk Friedrich Founder, Five Intelligences Alliance

Tomas Prajzler CEO, Talentor CZ

Rufo Guerreschi President, Coalition for a Baruch Plan for AI

David Sachs Assistant Professor, Icahn School of Medicine at Mount Sinai

Octavian M. Machidon Assistant professor, University of Ljubljana, Faculty of Computer and Information Science

Daniel Girshovich Human, TFH

Julia da Rocha Junqueira PhD Student, UFRGS

Bernhard Braun Scientific Associate, Federal Highway and Transport Research Institute Germany

Remmelt Ellen Coordinator, AI Safety Camp

Rolland Lucas Owner / Business Analyst, BrixTec Web Solutions

Luka Stevanovic Software Engineer, Connect The Dots

Kaarel Hänni AI Safety Researcher, Independent

Lucas E. Wall CEO & Founder, Almma.AI

Dino Lortie

Steven Potaczek Professor of Commercial Music, Samford University

Gordon Montgomery Researcher, Sofia.edu

Ann Bender Dalhäll Owner, Northbound group

Jordan Panayotov Founder, Creator of The Panayotov Matrix, superior tool which ensures that AI systems are truly Human-Centric, fully inline with The Pro-Human Declaration, Independent Centre for Analysis & Research of Economies

Svilen Kondakov Substitute Teacher

Ariel Agor Operational Ontologist, Agor AI Advisory

Dr. Martin Dinov CTO, Maaind/Lifefire

Jennifer Gibson Executive Director, Psst.org

Joseph Gelfer Organiser, Our Fair Future

James Norris Founder & Executive Director, Center for Existential Safety

Marie Lotto

Gabriel Barbosa Taffarel Undergraduate, UFRGS

Dr Michael Hall Senior Lecturer in Education, University of Winchester

Dr. Lovkush Agarwal Research Manager, MATS

Gianluca Bontempi Professor of Machine Learning, Université Libre de Bruxelles

Jaedon Satchell AI Developer, Cognizant

Tobias Fritz Assistant Professor, University of Innsbruck

Jeremiah Wesley Templeman Harding Postgraduate, London School of Economics and Political Science

Peter Joyce MD & CAIO, Grand Challenge AI

José González Artist, José González

Chris Scammell CEO, Buddhism & AI Initiative

Torbjörn Lundh Professor, Chalmers University of Technology

Steven Engler Professor of Religious Studies, Mount Royal University

Kyle Kahl Healer

Rabbi Daniel Levin Senior Rabbi, Temple Beth El of Boca Raton

David S Snyder, M.D. Retired Physician, City of Hope Cancer Center

Alan Sousie

Patricia Palmer Retired legal marketing director

Elena Chirila Commercial Director, B+N Integrated Facility Services

Anderson Maciel Professor, UFRGS

Mark Andrew Kordic Podcast Host, Advancing Your Vision

Janet Frances Howard

Jeff Cooper Executive Director, Approachable Intelligence Research Foundation

Joshua Kauffman Founder, Wisdom VC

MaryAnn Derrick-Green Owner, Studiomadtulsa

Joan Williamson-Kelly

José Jaime Villalobos Ruiz Multilateral Governance Lead, Future of Life Institute

David Markowitz Psychologist

Nate Hake Founder, Travel Lemming

John Mason

Japhet Student, Centre for Alternative Technology

Karl von Wendt Writer, Independent

Armando di Matteo Researcher, Istituto Nazionale di Fisica Nucleare, Sezione di Torino

Naiyarah Hussain Co-founder, AI Safety UAE

Jovin Cronin-Wilesmith Partner, Celium Group

Alexandre Ngau AI Strategy Consultant, Sia

Emily Boddy Co-Lead, Smartphone Free Childhood US

Mateusz Bagiński Researcher, AFFINE

Rabbi Josh Feigelson President & CEO, Institute for Jewish Spirituality

Antonis Polykratis Software Engineer

Andrew Shanahan Editor, Future of Life Institute

Faheem Salman Yunus CTO & Co founder, Nuvint Dynamics

Olin Thakur Co-Founder, Techplomacy Foundation

Vicente Pinto Citizen, TorchBearer Community

Harish Mehta Chairman, Onward

Lesaun Harvey Founder, Technological Liberty, inc

Krystal Jackson Non-Resident Research Fellow, UC Berkeley, Center for Long-Term Cybersecurity

Robert Rand Assistant Professor of Computer Science, University of Chicago

Dr. Joseph Thornton Candidate, Thornton for Florida House District 10

Allan Brooks Community Manager, The Human Line Project

Shantanu Ghosh, Ph.D. Professor, Amity University

Sreejith Sreedharan Author - Future of Work, Future of Work AI Labs

Paul Hebert Founder, AI Recovery Collective

Adrian A CTO, PT. Ksatria Mitra S

Rabbi Neal Gold Founder and Director, A Tree with Roots

Pedro Ávila Lecturer, Columbia University

Aiden Kim Researcher, Center for AI Safety

William Baird UK Board Member, PauseAI

Jose Cesar ‘Zeca’ Martins Leader, Derrubando Muros

Avinash Tax Consultant, KPMG

Sari Katariina Riippi AI engineer, Freelance

Charbel-Raphael Segerie Executive Director, CeSIA - French Center for AI Safety

Tunde Aideyan, PhD Instructor, Northeastern University

JB Herrera Founder/CEO, SynergiAI

Brendan O’Donoghue Managing Director, Artificial Observer Limited

Aidar Toktargazin Student, Nazarbayev University

Matteo Mastracci Senior Legal Officer, Italian Ministry of Education and Merit

Dr. Luiza Jarovsky CEO, AI, Tech & Privacy Academy

Xavier de Souza Briggs Senior Fellow, The Brookings Institution

Jake Hirsch-Allen Public AI Fellow and Director Partnerships and Advocacy, The Dais

Karine Landry Teacher, Cégep de Valleyfield

Matthew Prewitt President, RadicalxChange Foundation

Karl Berzins President, FAR.AI

Timothy O’Donnell Professor, McGill University

Dr. Sian Tsuei Clinical Assistant Professor, UBC

Samuel Robson Student, UC Berkeley

Rabbi David Nesson Rabbi Emeritus, Morristown Jewish Center

Julie Duvall MS Education - Resigned Teacher, Washoe County School District

Egg Syntax AI Safety Researcher, Independent

Dr. Ken Seidenman Senior Associate (patent attorney), FB Rice

Grigory Reznikov Graduate Student, Utrecht University

Joel Christoph Founder and Director, 10Billion

Martin Chang HPC engineer, Nekko

Ana Paula Castillo Rodriguez Co-director, AI Safety Initiative Amsterdam

Baran Peters AI Researcher & Founder, OpenDemocracy.AI

Nathan Thoma, PhD Clinical Associate Professor of Psychology, Weill Cornell Medical College

Maelle Andre Filmmaker

Tianhao Chen Founder, BEIJING KANGGAO LAW FIRM

Michael Garrett citizen, SUNY Downstate Medical Center, Brooklyn, NY

Daniel Lupión-Lozano Secretary, PauseAI Spain

Shrey AI Solution Engineer, DigiMantra

Said Achmiz

Pedro Javier Martos Velasco Principal Engineer, The Workshop

Benjamin Olsen Founder & CEO, interintelligence

Adrians Skapars AI Safety Researcher, University of Manchester

Uwohali Tuccio Founder, Permaculture DAO

Dominic Ferro, M.D. Psychiatrist, Sole practitioner

Wendy Jacobson, M.D. Psychiatrist & Psychoanalyst, Emory University School of Medicine, American Psychoanalytic Association

Geoff Goodman, Ph.D. Professor of Psychiatry and Behavioral Sciences; Associate Professor of Psychology and Spiritual Care, Emory University

Dr. Todd Essig Founder and Chair, APsA Commission on Artificial Intelligence

Peter Woodford Director, peterwoodford.com

Taylor Vignali Product Designer, Consultant

Billy McConnell Musician etc, Acid Ranger LLC

Dario Melpignano CEO, Adara

Alin Visan IT Support Engineer, Basware

Phil Salesses Founder & CEO, Move AI

Farzal Dojki Founder, DotZero DMCC

Polina Novoselova Data Scientist, Sber

Jason LaScola Field CTO / Senior Enterprise Architect, ServiceNow

Amanda Heenan Artist, Art for Healing

Brian Pedersen CTO, Proem

Alexandr Khlyntsov Human rights activist, Ili

Olena Marchenko Senior Manager, BMS

Chris Connor Founder, Relinvent

William Mullin Producer / President, Significant Media & Music

David Cosel Software Engineer, Happy Path Apps, LLC

Samuel Allen Owner, Rebelyell Enterprises, LLC

Timothy Kang Commissioner, Middle States Association Commissions on Elementary and Secondary Schools

Gerd Leonhard CEO, The Futures Agency

Henrique Becker University Teacher (on Probationary Period), Universidade Federal do Rio Grande do Sul

Nader Ghazal Chairman, African-Asian Council for AI and Cybersecurity

Roy Cornelissen Consultant and solution architect, Xebia

Kyle K Concerned Private Citizen

Jasmine Bhatia Senior Lecturer, Birkbeck, University of London

Samuel Shapero Principal Research Engineer, Georgia Institute of Technology

Matt Gaskell Director, Velogic Pty Ltd

Ian Ramsay Director, Flowstate

Gautham Vijayasankar Raja Founder, Tham Collective

Edson Prestes Full Professor, Federal University of Rio Grande do Sul

Tammy Richmond MS, OTR/L, FAOTA, FATA CEO/Pres, Go2Care, A Telerehab Company

Gautham Vijayasankar Raja Vice President, SAATH (South Asian Arts and Theater House)

Yomal Indula Perera Senior Software Engineer, WSO2 LLC

Ernest Nkunzimana AI/ML/IoT researcher, University of Rwanda, ACEIoT

Renee Moodie Founder, Safe Hands

Luigi Pedace Founder & CEO, Convergent

Prashant Bhardwaj Innovation Manager, Crif

Nicolas Miailhe Co-founder, AI Safety Connect

Morgan Nyman Enterprise Architect, Coromatic

David Frühbuß AI Researcher, Max-Planck Institute for Biochemistry

Damon Falck Research Fellow, MATS

Patrick NEYTS CEO, VECTRA International BV

Douglas Nascimento Student, UFRGS

Michele Fiore Senior Designer, Oedipus Design

Mr. Andres Munoz, Jr. Director of Educational Technology, Clearwater Central Catholic High School

James Beston CEO, Big Pixel Consulting Ltd.

Professor Alejo José G. Sison, PhD Professor, University of Navarra

Mike Ruddock Founder and CEO, Lithium Labs

Benjamin David Yetton Research Scientist, Google

Jeff Dobro, MD CEO, Eudaimonia Concepts

Rabbi Jeffrey Clopper clergy, Temple Beth El

Mauro Meanti Advisor, former Tech Industry Manager

Nick Fitz Founder & Managing Director, Juniper Ventures

Marc Cleiren AI & Education advisor - Founder Personal Development Centre FSW - Leiden University, Leiden University, The Netherlands

Dr Michael Coulthart Senior scientist (retired), Government of Canada

Eric Kirsten Writer

Kareem Magdy Engineering Student, Cairo University

Michael Eriksen Healthcare Marketing

Kelvin Meeks Founder, International Technology Ventures, Inc.

Anwar El-Homsi CEO, Luminah.ai

Carol Houle CEO, Inspire Digital Consulting

Annette Hernandez Psychologist, Private Practice

Ayhan Yükler President, cuBec, inc.

Andrew Jones Distribution, Sony Pictures

Helen Teplitskaia Founder & President, Global Alliance in Sustainability & AI

Sergio Abraham Founder & CEO, ExoMinds

Prof. Miguel Angel Aragon Calvo Researcher, UNAM

Dr Krste Pangovski Founder, Vision Intelligence

Marshall Herskovitz Writer/Producer/Director, The Bedford Falls Company

Gregory Conti Associate Professor of Politics, Princeton University

Dr Wellett Potter Senior Lecturer in Law, University of New England Australia

Carl Clinton Hillman Musician, Freelance

Carin Viscuso Owner/Designer, Carin Lynn Designs

Ranieri Sabatucci fmr Ambassador, European Union

Karina Alexanyan Founder/CEO, Positive Technology Institute

Michael Lambrellis Chief Technology Officer, Arc Of Life Pty Ltd

Shivaji Sondhi Wykeham Professor of Physics, University of Oxford

Rod Schneider Senior Consultant, Otic Group

David Timis Senior Fellow in AI Governance, Global Governance Institute

Zofia Dzik CEO/Founder, Humanites Institute-Human&Technology

Zofia Dzik CEO/Founder, CET - Center for Ethics in Technology

Prof. Dr. Kai Eckert Professor for Artificial Intelligence, Mannheim Technical University

Leon Sow Digital marketing manager and AI researcher, Nitor

K. VijayRaghavan Former Principal Scientific Adviser to the Government of India, National Centre for Biological Sciences, TIFR

Jessica Galoff Business leader, Elayne James Salon

Alex Pascal Executive Director, Berkman Klein Center for Internet & Society, Harvard University

Igor Zdrnja Senior Software Engineer, Mediaocean

Boris Baracaldo Software Engineer, Meta

Sam Collett CIO, Eventus.do Limited

Lavakumar E Cofounder & CEO, Entropik Technologies

Stacy Gildenston Co-Founder, 3primitives.io

Jerry Harris Voice Actor, Voices By Jerry

Jess Wright Audiobook Narrator

Alanna Stone Lead Software Engineer, Xebia Inc

Sara Morsey Actor, Audiobook Narrator, Professional Audiobook Narrators Association

Sr Nancy Usselmann, FSP Director, Pauline Media Studies

Denver Fletcher

David Frank Founder, Private Family Ventures LLC

Riccarda Zezza Chief Science Officer, Lifeed

Raymond E Cole III Voice Artist, freelance

Sab Kanaujia Co-Founder & CEO, Autonomic Health

Matheus Torsani Software Developer, Ramper

Michaela Prescott Founder, Moonlight

Marcelo Galotti Tavares de Castro Lawyer, Centro Universitário de Volta Redonda

Peter Suber Senior Advisor on Open Access, Harvard University

Meetali Jain Director, Tech Justice Law

Jonathan Yen Owner, Little Squirrel Studios

Cynthia Field, PhD Psychoanalyst

Paola Gozzo Lawyer

Sharon Winkler Owner, socialmediaharms.org

Sumanth Vijayaraghavan Self employed

Timothy Smith CEO, On Your Marketing

Joseph Brown Owner, Right From the Start

Kevin Eagle Oliver Owner, KEO Music

Grant Tildsley Ecommerce manager, Minimax

Célio Kénosis Systems Analyst, SEDUC

Pedro Business Intelligence Intern, AgiBank

Luiz Gustavo Lucena Manager, Thomson Reuters

Szabolcs Emich President, Future Weavers Association

Ned Hayes Founder & CEO, Human Intelligence®

Crystal Wilding Travel Advisor Travel Agent, Travel By Crystal

Ari Schulman Editor, The New Atlantis

Gerard de Melo Professor, University of Potsdam

Rebecca Schaeffer Professional Organizer, Simplicity Design

Martin Kloeckner-Habicht Consultant for ethical Communication and Technology, Rapid Resonance Consulting

Dr David Barstow Commissioner, World Council of Churches

Sebastian Wieremiejczyk Heuristic designer

Dr. Jana Brown Chief Human Resources Officer, Business and Academic Researcher

Marc Bolick Founder, reshift

Trishala Nara Young India Fellow, Ashoka University

Erik Kemper UX Designer, Self

Alex U. Akkaya Founder & CEO, Neo Negotium

Laura Bercioux Journalist, Napolart

Michael Chessey AI Designer / Creative Director / UI / UX, TheChessey

Patricia Ruiz-Cantu Founder and President, RENACEUSA

Maurice Charles Taylor Professor Emeritus, University of Ottawa

Heather L Spotts Business owner, Licensee from AZ board of Cosmetology

Liana Gillooly Principle Liaison, Center for Humane Technology

Noah Weinberger AI Policy Advisor and Inclusion Advocate, Graduate Student

Jon Ralston Recorder, Arizona Green Party

Alicja Wolny-Dominiak Associate Professor, University of Economics in Katowice, Poland

Piotr Maczuga Founder, Fundacja Digital Creators

Waldemar W. Koczkodaj, PhD Professor

Sebastian Bahrinipour Founder and Managing Partner, witten.group Partnership

Prof. Włodzimierz Gogołek professor emeritus, University of Warsaw

Lisa M. Baker Executive Coach, Lisa B Simplified LLC

Michael Myers Partner, High Seas

Chris Clonts Senior editor, automotive technology, SAE International

Andrée Harvey Copresident, LaCogency.co

Joe Fennell Founder, AI Compatible

Hernan Silva Sosa Professor - Faculty of Engineering, Corporación Universitaria Minuto de Dios, Bogotá-Colombia

Brie Linkenhoker Founder and Principal, Worldview Studio

Rafael Henrique do Nascimento Oliveira AGI Cognitive Researcher, Safe Core

Shastina Free Pioneer in the Evolution of Consciousness, Graceful Spontaneous Evolution

Omar Farooq Senior Platform Architect, International Organization

Paul Brooks Founder/CEO, Quiddity Systems, Inc

Hasan Vedat Gurer Owner, Gurer law firm

Domenico Talia Professor, Università della Calabria

Josh Dray CISO, San Jacinto College

Keaton McNulty PhD Student, UTK

Tobias Utterhall Sound technician

Oleksandr Hodovanets Student, University of Illinois Chicago

Noam Peleg Independent, Independent

John Nicolls Student, Queen's University

Fionn Kilfeather Student, Blackrock College

Daniel Broderick Student, Canyons District

Alex Santa Maria Legal Assistant, Burnett Law

Dremor Uni student, PTU

Stephen A. Wilson Web Developer, OpenText

Anthony Onesto Founder, Ella Adventures

Arkadiusz Florczak Advisor, Narodowy Bank Polski

Kristin Sanden Genetic Counselor, mother, citizen

Dr. Nathan Nichols VP of Product Management, Salesforce

Justin Estrada Film maker

Lucas Mejia Student

John Bennett Initiative Director, California Initiative for Technology and Democracy

Wilson Alejandro Beltran Quiñones Senior Systems Engineer, Independent

Benedek Koleszár Student, University of Oxford

Maximilian Wu Student, Christopher Newport University

Daniel Reeves CEO, Beeminder and AGI Friday

Arin Bhandari Software Lead, 2726 Red Pebble Rebels (FRC Team)

Poppy Payne Game Developer, Animator, Music Producer, MantisGirl

Philipp Weiß AI researcher, Fraunhofer FIT

Dr Petr Lebedev Science Communicator, SciencePetr

Shae Ammon Holme

Mark Rottke Member, FREIE WÄHLER

Antonio Mora Rives CTO, Trusted Humans Unipessoal LDA

Stian Fliid Graphic designer and illustrator, Freelance

Jan Prokes software developer, Accenture

Carlo Martinucci CEO, Unbubble News

Andrés Felipe Vargas Mariño Lecturer, Universidad Santo Tomás

Kintsugi Algernon Mayhem Associate Professor, UM Flint

Marc Musse AI Excellence Manager, Robert Bosch GmbH

Hinoki Taguchi Student

Mahe Sweeney Student, N/a

Kevin Lindsay Principal Software Engineer, Surge Solutions

Dina Capra

Joshua Alan Squibb RN, Houston Methodist Hospital System

Cooper Hewett-Marx

Lasse Finnes Torp Student, NTNU

Riley Uppal Lawyer, The London School of Economics and Political Science

James Wilson Global AI Ethicist, Capgemini

Eric Cohen Founder & CEO, certifying conversational AI safety; inventor of the Reebok Pump; Harvard mentor, Vero Labs

Russell Collins Founder, the Solomon Project

Dmitry Semadi Junior Developer, Positive

Matthew William Britz 12 year old boy worried about our depressing future as a species

True Mayer Bus Driver, Illinois Central School Bus

Jennifer Chase Mother, San Joaquin River Club, Inc.

Leroy Long 7th grader

Joseph Edward Redmon III

Felipe Cabello Russo Magalhães Silva Engineering student, Universidade Federal de Minas Gerais

Liza Scriggins Artistic Director, StoryMusic

Timothy Yong Student, Nanyang Technological University

Michael J McGuffin Professor, École de technologie supérieure

Samer Hassan Professor of Computer Science, Berkman Klein Center at Harvard University & Universidad Complutense de Madrid

Simón Hoffmann CGO, Gamein fZ lle

Sarthak Kumar

Ethan Ghoul

Alice Rondelli Author and researcher, (F)ATTUALE rivista | podcast

Eleanor Beer Director, Hoffmann-Beer Homes

Jason M Redmon

Eric Ezechieli Co-founder, NATIVA

Darrell Mesa Chief AI Officer, Navique Corporation

Brian David Fisher, Ph.D. Professor, Simon Fraser University

Nova Witten-Hannah Student, Green Bay High School

Noah Pillemer Co-Founder, INLP Pickups

Alexander K Chavez CTO, Helpsy

Vágvölgyi Gusztáv Community Developer, Inspi-Ráció Egyesület

Thibaud Veron Chief of Staff, French Center for AI Safety

Krishna Kavi Emeritus Professor, University of North Texas

Richa Parikh Growth, ETHGlobal

Clayton Oppenheimer Rabbi, HUC

Victoria Krakovna Cofounder, Future of Life Institute

Nick Shapiro Software Engineer

Ramana Pureti Engineer

László Zalatnay President, Energy and Environment Foundation

Darío Maestro Legal Director, Surveillance Technology Oversight Project

Dr. Emi Barresi Founder/CEO, HEARTH Leadership

Jonathan Davidson Student, University of North Georgia

Saphonia Foster Founder, AI for the People

Martha Stone Palmer Professor, University of Colorado Boulder

Niv Sharma Founder, Hollow-Bamboo Innovations

Prof. Dr. Christiane Woopen Director, Center for Life Ethics, University of Bonn

Iñigo Lizarribar Imaz CEO, Ctrl+i

Mohab Yasser Programmer, qbitgroup

Christopher DiCarlo Founder, Critical Thinking Solutions

Rochelle Harris Director, Zeneva Digital

Sr Gail Worcelo Co-founder and President, Sisters of the Earth Community

Brody Lister Undergraduate, California State University Fullerton

Kakani Khasyap Student, Chennai Public School

Daniel Cameron Software Engineer, UL Solutions

Dr. Izolda Takacs International lawyer, Norwegian Centre for Human Rights

Daniel Ben Moses 3D modeler, texturer, rigger, animator, writer, director, actor, and game developer

Alexander Mohar Csaky Founder

JR Redmond Researcher, NYU

Dr. William Leiss Professor, Queen's University (Canada)

Sam Hiner Executive Director, Young People's Alliance

Richard Smith Professor, Simon Fraser University

Duana Fullwiley Professor of Anthropology, Stanford University

Sho Okiyama Founder and CEO, Aillis, Inc.

Ioannis Dermousis Systems Administrator, NCSR DEMOKRITOS - Greece

Zack T Student, George Mason University

Sridhar Ramamoorti Associate Professor of Accounting, University of Dayton

Kimani KJ Camron Calliste Business Designer, KJ Camron

Remi de Wilde MSc Founder, Lombriz Feliz

Jaeson Booker Executive Director, AI Safety Research Fund

Milana Mezhieva Student, Queen Mary University of London

Thomas Ersevim Physics PhD Student, University of California, Berkeley

Matt Dirks Managing Partner, Neralake Inc

Dr. Roberto Orosei Senior researcher, Istituto Nazionale di Astrofisica (National Institute for Astrophysics), Italy

Mulya van Roon Founder, The Responsible AI Center

Sarah Koschny Student, University of Wollongong

Gustaf Graf Software Developer, onyo

James Dashiell

Roméo Hennion CEO, GameFlow

Flavio Bordignon President, Dorg Society Foundation

Jaan Tallinn Cofounder, Future of Life Institute

Ramana Kumar former Research Scientist, Google DeepMind

Marco Bani Head of Institutional Affairs - Democratic Party, Italian Senate

Steve Omohundro Founder, Beneficial AI Research

Sergio Lopez Figueroa CEO, Polyhum

Julian Torres Lara Artist, Editorial Fatum

Christopher DiCarlo Senior Advisor, Convergence Analysis

Meredith Potter Executive Director, American Security Foundation

Mohammed Hossam Eldin Mechatronics and Robotics Student, Canadian International College

Jud Lein artist

Sloan Kulper, PhD CEO, Lifespans Technologies Pte Ltd

Ihor Ivliev Maker, Texttics

Haider Imam CEO, HI Energy Ventures

Jude Williams Student, ASCTE

Jon Down, PhD Professor of AI and Entrepreneurship, University of Portland

Shane Watson Owner, Shane Watson Music

Tom Higley CEO, X Genesis

Geoff Ralston Founder and Partner, Safe AI Fund (SAIF)

Richard A Schreiber Founder, LawFirmAIExpert

Richard M Georgeoff III Software Engineer, Meta

Tom Grogan Student

Noah Barger Student, Liberty University

Thomas Herbig Chief Research Officer, Just Capital

Kyungho Song Senior Researcher, Korea AISI

Julius Steiglechenr PhD Student, Max Planck Institute for Biological Cybernetics

Gustav Šír, PhD AI researcher, Czech Technical University

Niamh Lenihan Founder and Director, Centre for Digital Ethics

Sophia Andren CTO, candycode

Shirish Bhattarai Product Lead, Trase

Mr. Alan Lewis Director, Sigmatech Analysis

Fabian Finkbeiner Rivera Student, ETHZ

Samuel Martinsson MSc Student, Chalmers University of Technology

Ibai Zarketa Moyua Teacher, Education

Felipe Garcia Projectleader Architect, Tengbom arkitekter

Marleen van Leengoed Strategist and ethicist, Values Based Innovation Studio

Jeffrey Golby CEO, WellFunded

Ashanti Ternoir Self, Self

Dr. Shannon H Doak Founder, IPLNA

Julia Jacobs Founder, New Era Code

Andrea Tidu Student, Università degli Studi di Milano

F. D. Cybersecurity Engineer, Censored

Arnaud Legrand Research Director, CNRS/Inria/Univ. Grenoble Alpes

Alexzandria Compton Marketing Professional + Designer

Colton O’Bray Graduate student, Kansas State University

Lynn Frederick Dsouza Country Advisory Member, G100 Security & Defence Wing

Linda Michaels Chair and CoFounder, Psychotherapy Action Network

Osmani Redondo Director, AI Safety Spain (IAS)

Sachchidanand R. Swami Senior Information Technology (IT)/Software Professional, Critical Thinker, Writer

Zachary Smiley Founder, Illuminant Records

Kushal Agrawal Lead Applied Scientist, Relativity

Maia Fraser Associate Professor, University of Ottawa

Madison Rouzier Student, John Abbott College

Joseph Erdosy Senior Cyber Security Consultant, Ring 0 Cyber Security Consulting

Jeff Hancock Professor, Stanford University

Jenafer Matthews Me, Humanity

Rufo Guerreschi Executive Director, Coalition for a Baruch Plan for AI

Nikita Saxena Research Engineer, Google DeepMind

Jai Jaisimha Co-Founder, Transparency Coalition

Leo Wu Co-Founder, AI Consensus

Sylvain Noel Vice-president, Syntax

Chantal Spenard Retired activist, Synoptik

Eric Leijonmarck Software Engineer, Grafana

Dias Chief Strategy Officer, Wimax international

Etienne Brisson Founder and President, The Human Line Project

Michael Downey Treasurer, FLOSS.social

Tracey Rogers Brandt // Human Citizen, Caregiver, Steward and Participant Imagineer and Life Affirming Futures Changemaker, Circular Patterns, She's Geeky, AI Edition

Aasia Khanum Professor of Computer Science, Forman Christian College

Susan McP. Ryan Owner, Susan McP. Ryan

Eva Strautmann Artist / Author, Art

Bart Massee Founder, Bart Massee Design

Mehdi Adda Professor, Université du Québec à Rimouski

Matthew McDonnell Hutto Scholar of Life, Ethics, and Knowledge, Independent

Brad Knox Research Associate Professor of Computer Science, University of Texas at Austin

Marc Crompton Educator, St George's School

Harro Lemstra Owner, Redwhiteblue.eu

Angelica Lim, Ph.D. Associate Professor, Simon Fraser University

Ernest Zenko Professor and Director, Centre for Responsible Artificial Intelligence, University of Primorska

Peter Demarest Founder and CEO, The Institute for Value-Generative AI Inc.

Nathan Sidney Business Coordinator, Tasmanian Land Conservancy

Sheila McIlraith Professor of Computer Science, University of Toronto; Associate Director, Schwartz Reisman Institute for Technology and Society

Daniel Heitzinger Student, ETH Zürich

Takafumi Matsumaru Professor, Waseda University

Richard Smith, PhD Professor, Simon Fraser University

Michael Dobrin IT Manager, Rakuten

Maren Costa Founder, 280m.org

Dr. Jobst Heitzig Senior Scientist, Potsdam Institute for Climate Impact Research

Demetrius Floudas Professor, IKBFU & Leverhulme Centre for the Future of Intelligence, University of Cambridge

Carl Shaw

Victoria Ustimenko CEO & Founder, PRETO BUSINESS Corp.

Todd Davies Academic Research and Program Officer, Stanford University

Mathew Daniel Director, Afkari

Jakub Growiec Professor, SGH Warsaw School of Economics, Poland

Παναγιώτης Ξεκουκουλωτάκης Student

Santiago García Cofounder, Future for Work Institute

Hochang Song Lawyer, former congressman in South Korea, President of AI for All Forum, AI for All Forum

Pieter Swart Associate Director, Web Development, Impact.com

Kevin Baum Senior Researcher, Ethicist, German Research Center for Artificial Intelligence (DFKI)

Marta Grau Masip President of Board of Trustees, Alzheimer Catalunya Fundacio

Don Tocher Managing Director / IT Consultant, Donline Computer Consultancy Ltd

Andrew Mackay

Alexandre Rispal Partner and Author, HEKZE

Jarrod Humphrey Assistant Professor, Xavier University

em. Univ. Prof. Dr. Robert Wolff retired, University Mozarteum

Benjamin de Seingalt Corporate Counsel, Compliance Officer, and Director of Applied AI, MarketVision Research

Jill Baillargeon Person

Timo Off School Principal & Founder, Agency for Human Affairs (nexus-m.de)

Lee Behrns Principal, Cornerstone Christian School

Eliyahu Elia Ole Medukenya investor, lobore ltd

Prof. Phil Janson Computer Science research & teaching, IBM Research & Ecole Polytechnique Fédérale de Lausanne (retired)

Hochang Song president, former Congressman in South Korea, AI for All Forum

Mr Rowan Harris O’Neil Student

Sean D. Duncan PhD Candidate, Liberty University

Vojtech Cahlik PhD Student, Czech Technical University in Prague

Samuel Smith Software Engineer, Monte Carlo

Pedro Jose Barbeito Gonzalez Doctor of Natural Sciences (retired), Technology College Sarawak

Autumn Gapsis

Rania Masri, PhD Co Director, North Carolina Environmental Justice Network

Julien Rigal-Dupont Creator of Human Experiences, JRD Experiences

Dr Anne Rooney Author, children's science books and books on the history of science for adults

Kees Kool Product Owner, Sacha Coaching en Training

Vasilis Babouris Director of Studies, Metafrasi Translator Training Centre

Pete McDonnell Academic Librarian, A. C. Clark Library, Bemidji State University, MN, USA

Marcello Ferrante Teacher, MIM

Mackyle Conner Wotring Student, Santa Rosa Junior College

Barry Schwartz Owner, Barry Schwartz Photography

Wayno® Cartoonist, Bizarro/King Features Syndicate

Sue Clancy Artist, this artist studio

Suzanne E. Pena concerned citizen

Saddie Parada Social Worker

Makenna Drake Founder, Reciprocity Music

Dr. Maria Johansson Researcher AI Safety

Prof. Dr. Mircea Bertea President, National Association of Pedagogical Colleges and High Schools (ANCLP)

Thijs Jenneskens campaigner, PauseAI

James Mackay Artist, Freelance

Iris Uderstädt CEO, mindful@work

Cupatitzio Ramirez Romero Professor, Benemérita Universidad Autónoma de Puebla

The Pro-Human AI Declaration Supplemental Statement

AFL-CIO Tech Institute | March 4, 2026

The AFL-CIO Tech Institute supports the spirit of the Principles of the Pro-Human AI Declaration, shares the conviction that AI must serve people, not the reverse, and affirms the need for:

- Keeping Humans in Charge

- Avoiding Concentration of Power

- Protecting the Human Experience

- Human Agency and Liberty

- Responsibility and Accountability for AI Companies

Additionally, we believe that work is an essential element of human dignity, and must be central to any policy debate around AI. The AFL-CIO’s AI Principles center workers and ensure that the benefits of new technologies are widely shared and do not lead to dangerous, discriminatory and anti-worker outcomes. It is important that we do not concede to the notion that AI adoption is inevitable. We must recognize the limitations that exist for this technology, which does not supersede the knowledge, experience, and hands-on work required for many private and public sector jobs. Workers must have a hand in shaping when and how the technology is developed and deployed to ensure that it improves society, delivers public benefits, and does not lead to the displacement of workers. As rapid technological change is poised to define the future of millions of jobs, it is essential that federal, state, and local leaders champion policies that:

- Strengthen labor rights and broaden opportunities for collective bargaining

- Advance guardrails against harmful uses of AI in the workplace

- Support and promote copyright and intellectual property protections

- Develop a worker-centered workforce development and training system

- Institutionalize worker voice within AI R&D

- Require transparency and accountability in AI applications

- Model best practices for AI use with government procurement

- Protect workers’ civil rights and uphold democratic integrity

The AFL-CIO has adopted principles for fair, safe, responsible and worker-centered AI. We choose a future where progress and opportunity benefit everyone, and where AI isn’t used against us or to weaken the protections that a fair and thriving democracy demands.